Hand Tracking And Gesture Detection (OpenCV)

The aim of the project was to devise a program that can detect hands, track them in real time, and perform some gesture recognition, using only simple signal processing on images from a regular laptop webcam. It was an under-two-week project, so the threshold values in the code, including those for the Canny filter, need more tuning. It does not work well with a changing background. A better background detection and subtraction algorithm would give better results.

- Detecting Background

Given the feed from the camera, the first thing to do is to remove the background. We keep a running average over the sequence of frames, and this average image serves as the background. For each new frame x, the average is updated as avg = alpha * x + (1 - alpha) * avg. This works because of the assumption that the background is mostly static. For stationary items, the pixels are unaffected by the weighted averaging, since alpha * x + (1 - alpha) * x = x. Pixels that are constantly changing are not part of the background, so they get weighed down. The stationary pixels, i.e. the background, become more and more prominent with every iteration, while the moving ones get averaged out. After a few iterations, the average contains only the background. In this case, even my face is part of the background, as the program needs to detect only my hands.
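The running average can be sketched in a few lines of plain Python. This is only an illustration, not the project's code: images are represented as lists of rows of floats, and the function name `update_background` and the learning rate `alpha=0.05` are assumptions chosen for the example.

```python
def update_background(background, frame, alpha=0.05):
    """Blend one grayscale frame into the running-average background.

    Both images are lists of rows of floats. alpha is a hypothetical
    learning rate, not a value taken from the project.
    """
    return [
        [alpha * x + (1.0 - alpha) * b for x, b in zip(frame_row, bg_row)]
        for frame_row, bg_row in zip(frame, background)
    ]


# A 1x3 "image": the first two pixels are static, the third one flickers
# between 255 and 0 on alternate frames.
frames = [[[10.0, 200.0, 0.0 if i % 2 else 255.0]] for i in range(50)]

background = frames[0]
for frame in frames[1:]:
    background = update_background(background, frame)

# Static pixels keep their value; the flickering pixel is pulled
# towards an in-between value instead of following the frame.
print(background)
```

With real camera frames, OpenCV's `accumulateWeighted` applies the same update to a floating-point accumulator image.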

- Background Subtraction

A simple method to start with is to subtract the pixel values. However, this can produce negative values, which cannot be stored in an 8-bit unsigned pixel (range 0 to 255). And what if we have a black background? Nothing gets subtracted in that case. Instead, we use a built-in background subtractor based on a Gaussian Mixture-based Background/Foreground Segmentation Algorithm. Background subtraction involves calculating a reference image, subtracting each new frame from this image, and thresholding the result, which gives a binary segmentation of the image that highlights regions of non-stationary objects. We then use erosion and dilation to make the changes more prominent.
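To make the subtract, threshold, erode, dilate pipeline concrete, here is a minimal sketch in plain Python. It uses simple absolute differencing against a fixed reference image as a stand-in, not the Gaussian-mixture subtractor the project relies on. The function names, the `threshold=30` value, and the 3x3 neighbourhood are all assumptions made for the example.

```python
def foreground_mask(frame, background, threshold=30):
    """Return a binary mask: 1 where the frame differs from the background.

    Taking the absolute difference avoids negative values and works for
    both darker and brighter backgrounds.
    """
    return [
        [1 if abs(f - b) > threshold else 0 for f, b in zip(frow, brow)]
        for frow, brow in zip(frame, background)
    ]


def _morph(mask, combine):
    """Apply a 3x3 neighbourhood operation (min = erosion, max = dilation)."""
    h, w = len(mask), len(mask[0])
    out = [[0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            neighbours = [
                mask[ny][nx]
                for ny in range(max(0, y - 1), min(h, y + 2))
                for nx in range(max(0, x - 1), min(w, x + 2))
            ]
            out[y][x] = combine(neighbours)
    return out


def erode(mask):
    return _morph(mask, min)


def dilate(mask):
    return _morph(mask, max)


# 7x7 scene: uniform background, a 3x3 moving object, one noisy pixel.
background = [[100] * 7 for _ in range(7)]
frame = [row[:] for row in background]
for y in range(2, 5):
    for x in range(2, 5):
        frame[y][x] = 200
frame[0][6] = 180  # isolated noise

mask = foreground_mask(frame, background)
cleaned = dilate(erode(mask))  # erosion removes the speck, dilation restores the blob
for row in cleaned:
    print(row)
```

Eroding first and dilating afterwards removes isolated specks while keeping larger blobs at roughly their original size.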

- Contour Extraction

Contour extraction is performed using OpenCV's built-in edge extraction function, which uses a Canny filter. You can tweak its parameters to get better edge detection.

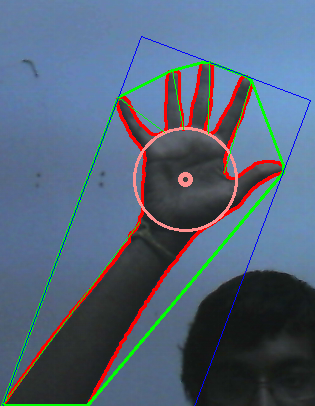

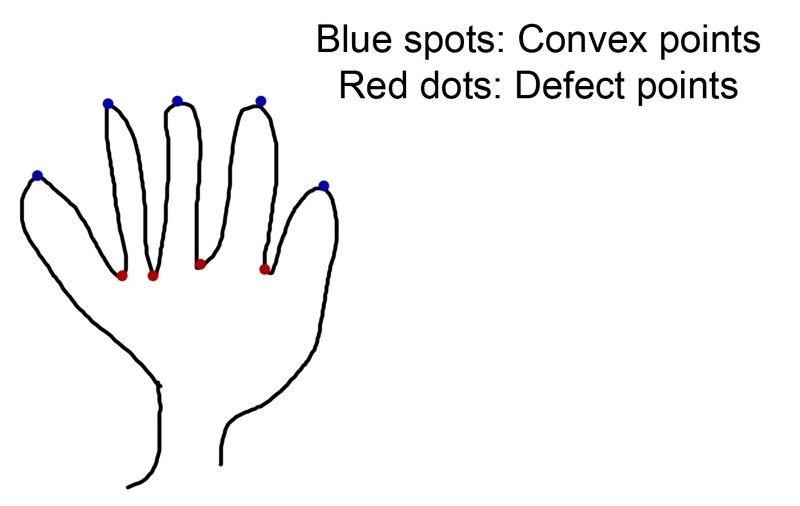

- Convex Hull and Defects

Now, given the set of points for the contour, we find the smallest-area convex hull that covers the contour. The observation here is that the convex hull points are most likely to be on the fingers, as they are the extremities, and this fact can be used to detect the number of fingers. But since the entire arm is in the picture, there will be other points of convexity too. So we find the convexity defects, i.e. between each pair of neighbouring hull points, we find the deepest point of deviation on the contour.
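The hull and defect idea can be illustrated without OpenCV. The sketch below is an assumption-laden toy, not the project's code: it builds the hull with the standard monotone-chain algorithm and, for each pair of consecutive hull points along the contour, reports the contour point farthest from the line joining them. The function names, the `min_depth` cutoff, and the toy contour are hypothetical, and the contour is assumed to be a simple closed polygon given in order.

```python
import math


def cross(o, a, b):
    """Z-component of the cross product of vectors OA and OB."""
    return (a[0] - o[0]) * (b[1] - o[1]) - (a[1] - o[1]) * (b[0] - o[0])


def convex_hull(points):
    """Monotone-chain convex hull, returned in counter-clockwise order."""
    pts = sorted(set(points))
    if len(pts) <= 2:
        return pts
    lower, upper = [], []
    for p in pts:
        while len(lower) >= 2 and cross(lower[-2], lower[-1], p) <= 0:
            lower.pop()
        lower.append(p)
    for p in reversed(pts):
        while len(upper) >= 2 and cross(upper[-2], upper[-1], p) <= 0:
            upper.pop()
        upper.append(p)
    return lower[:-1] + upper[:-1]


def line_distance(p, a, b):
    """Perpendicular distance from p to the line through a and b."""
    return abs(cross(a, b, p)) / math.hypot(b[0] - a[0], b[1] - a[1])


def convexity_defects(contour, min_depth=1.0):
    """Return (start, end, deepest_point, depth) for each hull gap."""
    hull = set(convex_hull(contour))
    hull_idx = [i for i, p in enumerate(contour) if p in hull]
    n = len(contour)
    defects = []
    for k, start in enumerate(hull_idx):
        end = hull_idx[(k + 1) % len(hull_idx)]
        a, b = contour[start], contour[end]
        # Contour points strictly between the two hull points.
        between = [contour[(start + s) % n] for s in range(1, (end - start) % n)]
        if not between or a == b:
            continue
        deepest = max(between, key=lambda p: line_distance(p, a, b))
        depth = line_distance(deepest, a, b)
        if depth >= min_depth:
            defects.append((a, b, deepest, depth))
    return defects


# Toy "hand": three fingertips with two valleys between them.
contour = [(0, 0), (0, 10), (2, 5), (4, 12), (6, 5), (8, 10), (8, 0)]
hull = convex_hull(contour)
defects = convexity_defects(contour)
for start, end, deepest, depth in defects:
    print(start, end, deepest, round(depth, 2))
```

On real contours, OpenCV's `convexHull` and `convexityDefects` functions provide the same kind of information.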

- Tracking and Finger Detection

The defect points are most likely to be the centers of the finger valleys, as shown in the picture. We take the average of all these defects, which is bound to lie somewhere in the palm, but it is a very rough estimate of the palm center. Now we assume that the palm is angled in such a way that it is roughly a circle. To find the palm center, we take the 3 defect points closest to the rough palm center and find the center and radius of the circle passing through these 3 points. This gives us the center of the palm. Due to noise, this center keeps jumping, so to stabilize it we take an average over a few iterations. The radius of the palm is an indication of the depth of the palm, so with the center and the radius we can track the position of the palm in real time and also estimate its depth.

The next challenge is detecting the number of fingers. We use a couple of observations to do this. For each maximal point, which will be a fingertip, there will be 2 minimal defect points marking the valleys on either side. Hence the maximum and the 2 minima should form a triangle in which the distances from the maximum to each minimum are more or less the same. The minima should also be on, or pretty close to, the circumference of the palm circle. Finally, the ratio of the palm radius to the length of the finger triangle should be more or less constant. Using these properties, we get the list of maximal points that satisfy the above conditions, and the size of this list is the number of fingers. If the number of fingers is 0, the user is showing a fist.
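The palm-center step can be sketched as follows. This is an illustrative reading of the description above rather than the project's implementation: the names `circle_from_points`, `estimate_palm`, and `PalmSmoother`, as well as the smoothing window of 5, are assumptions made for the example.

```python
import math
from collections import deque


def circle_from_points(p1, p2, p3):
    """Center and radius of the circle through three points.

    Returns None when the points are (nearly) collinear.
    """
    ax, ay = p1
    bx, by = p2
    cx, cy = p3
    d = 2.0 * (ax * (by - cy) + bx * (cy - ay) + cx * (ay - by))
    if abs(d) < 1e-9:
        return None
    a2, b2, c2 = ax * ax + ay * ay, bx * bx + by * by, cx * cx + cy * cy
    ux = (a2 * (by - cy) + b2 * (cy - ay) + c2 * (ay - by)) / d
    uy = (a2 * (cx - bx) + b2 * (ax - cx) + c2 * (bx - ax)) / d
    return (ux, uy), math.hypot(ax - ux, ay - uy)


def estimate_palm(defect_points):
    """Fit a circle to the three defect points nearest their average."""
    n = len(defect_points)
    rough_x = sum(x for x, _ in defect_points) / n
    rough_y = sum(y for _, y in defect_points) / n
    nearest = sorted(
        defect_points,
        key=lambda p: math.hypot(p[0] - rough_x, p[1] - rough_y),
    )[:3]
    return circle_from_points(*nearest)


class PalmSmoother:
    """Average the palm center over the last few frames to reduce jitter."""

    def __init__(self, window=5):
        self.history = deque(maxlen=window)

    def update(self, center):
        self.history.append(center)
        n = len(self.history)
        return (
            sum(x for x, _ in self.history) / n,
            sum(y for _, y in self.history) / n,
        )


# Three valley points on a circle of radius 5 around (5, 5), plus an
# outlier (for example a defect near the wrist or arm).
defect_points = [(0, 5), (10, 5), (5, 0), (40, 40)]
center, radius = estimate_palm(defect_points)

smoother = PalmSmoother()
smoother.update((4.0, 5.0))
smoothed = smoother.update((6.0, 5.0))
print(center, radius, smoothed)
```

Returning `None` for collinear points lets the caller skip a frame instead of dividing by zero when the three nearest defects happen to lie on a line.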

In theory it all sounds good, but the number of fingers reported by the program is hit-and-miss, so use it at your own risk. I did not get time to play with the thresholds, so I expect you would get pretty good results with the right values for things like the ratio of palm radius to finger length, the finger length, etc. With the program as given, you can move the mouse pointer and click. The code is located at: https://github.com/jujojujo2003/OpenCVHandGuesture

The report is located here .